However, calculating probabilities like W can be very challenging. Using statistical probability is very useful for visualizing how a process occurs. That is to say, doubling the number of molecules doubles the entropy. It is clear from this equation that entropy is an extensive property and depends on the number of molecules.

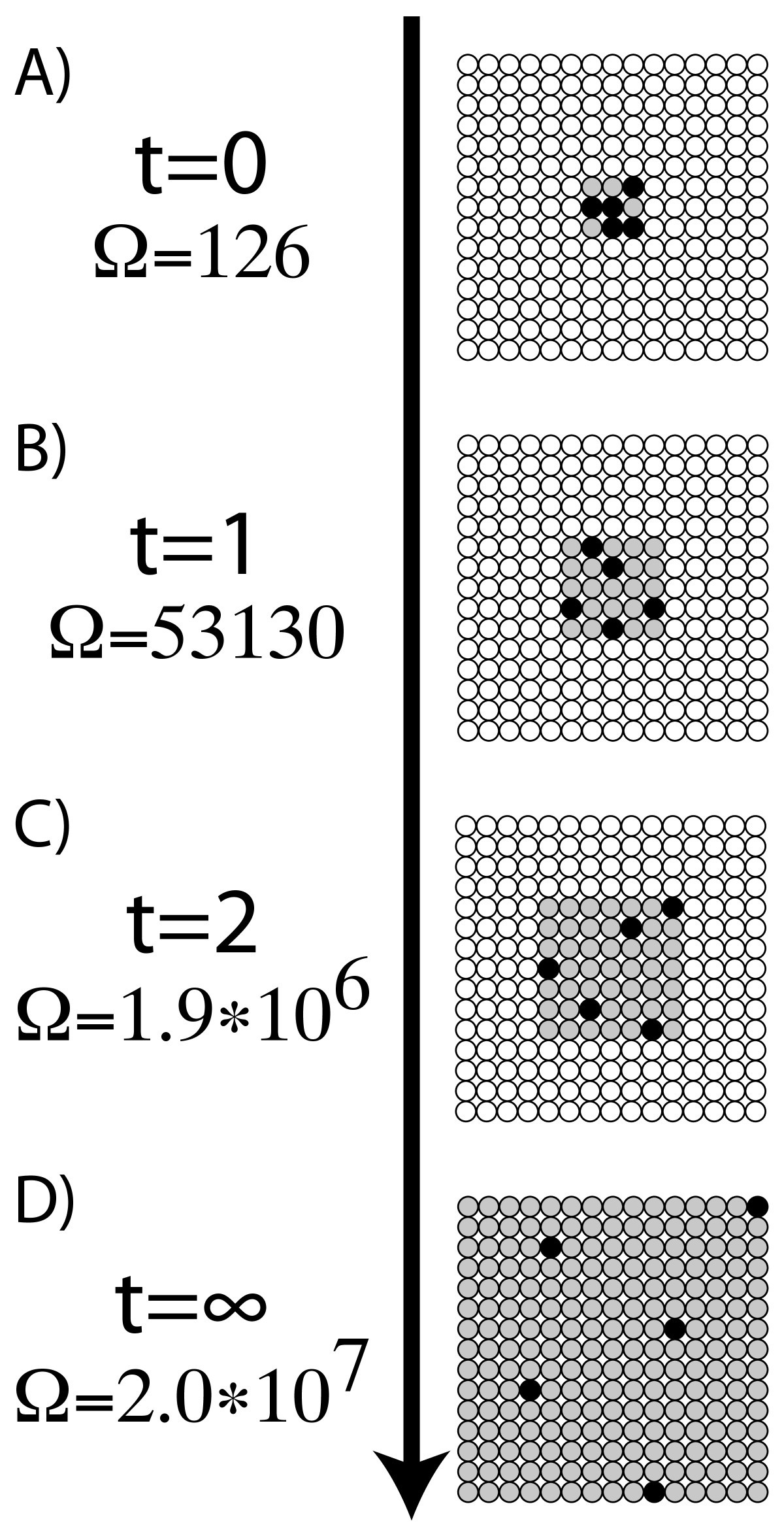

The above equation is known as Boltzmann Equation, named after Austrian physicist Ludwig Boltzmann. W: Number of microstates corresponding to a given macrostate The key assumption made here is that each possible outcome is equally probable, leading to the following equation: Using Statistical Probability: Boltzmann Equation It can be quantitatively measured in terms of a system’s statistical probabilities or other thermodynamic quantities. How to Calculate EntropyĮntropy is a qualitative measure of how much the energy of atoms and molecules spreads during a process. These attributes of entropy are essential for formulating the Second Law of Thermodynamics. A positive entropy means an increase in disorder. Generally, the combined entropy of the system and the surrounding for a spontaneous process increases. Entropy and the Second Law of ThermodynamicsĪ system at equilibrium does not undergo an entropy change because no net change is occurring. Entropy is often called the arrow of time because matter tends to move from order to disorder in isolated systems. Since entropy measures disorder, a highly ordered system has low entropy, and a highly disordered one has high entropy. It is an extensive property, meaning entropy depends on the amount of matter.
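A minimal Python sketch of the Boltzmann equation $S = k_B \ln W$ may help make the extensivity argument concrete. It assumes a hypothetical toy system in which each of $N$ particles independently occupies one of two equally likely states, so $W = 2^N$; the function names `boltzmann_entropy` and `toy_microstates` are illustrative only, not taken from any library.

```python
import math

# Boltzmann constant in J/K (exact value in the 2019 SI definition)
K_B = 1.380649e-23


def boltzmann_entropy(microstates: int) -> float:
    """Return S = k_B * ln(W) for W equally probable microstates."""
    if microstates < 1:
        raise ValueError("W must be a positive integer")
    return K_B * math.log(microstates)


def toy_microstates(n_particles: int) -> int:
    """Hypothetical toy model: each particle independently sits in one of
    two equally likely states, so the number of microstates is W = 2**N."""
    return 2 ** n_particles


# Doubling N squares W, and ln(W**2) = 2 ln W, so the entropy doubles.
s_100 = boltzmann_entropy(toy_microstates(100))
s_200 = boltzmann_entropy(toy_microstates(200))
print(s_100, s_200, s_200 / s_100)  # the ratio is 2 up to floating-point rounding
```

A system with a single possible microstate ($W = 1$) has zero entropy under this formula, which matches the idea that a perfectly ordered system has the lowest entropy.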

Entropy refers to the number of ways in which a system can be arranged: the higher the entropy, the more disordered the system. An example makes this easier to understand. Suppose you spray perfume in one corner of a room. What happens next? The smell spreads through the entire room, and the perfume molecules eventually fill it.

In another example, you put a single ball on a table. In how many ways can you arrange this ball? The answer is one. Now put a second ball on the table: the two balls can be arranged in two ways. The more balls you add, the more ways they can be arranged.

The Second Law of Thermodynamics

The second law of thermodynamics states that in any process the total entropy of an isolated system either stays the same or increases. When a cyclic process is reversible, the total entropy does not change; when a process is irreversible, the total entropy increases. As an analogy, a reversible process is like a movie that still looks realistic when played backward. Blowing up a building or frying an egg, on the other hand, is an irreversible change. The melting of a metal at its melting point is another example of a reversible phase change, and some microscopic processes are also reversible.

Entropy Formula

Entropy is a thermodynamic state function that measures the randomness, uncertainty, or disorder of a system. For example, the entropy of a solid, whose particles are closely packed, is lower than that of a gas, whose particles are free to move. Scientists have also concluded that in a spontaneous process the total entropy of the system and its surroundings must increase. Several equations can be used to calculate entropy, including:

1. If the process takes place at a constant temperature, the entropy change is $\Delta S = q_{rev}/T$, where $q_{rev}$ is the heat transferred reversibly and $T$ is the absolute temperature in kelvin.
2. The total entropy change includes the entropy change of both the system and the surroundings: $\Delta S_{total} = \Delta S_{system} + \Delta S_{surroundings}$.
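The constant-temperature relation $\Delta S = q_{rev}/T$ and the system-plus-surroundings sum can be sketched in Python as follows. This is a minimal illustration under stated assumptions: the helper names are hypothetical, and the example assumes melting 1 mol of ice reversibly at 273.15 K with an enthalpy of fusion of roughly 6010 J/mol, a commonly quoted textbook value.

```python
def isothermal_entropy_change(q_rev_joules: float, temperature_kelvin: float) -> float:
    """Delta S = q_rev / T for heat q_rev transferred reversibly at constant T."""
    if temperature_kelvin <= 0:
        raise ValueError("T must be a positive absolute temperature in kelvin")
    return q_rev_joules / temperature_kelvin


def total_entropy_change(ds_system: float, ds_surroundings: float) -> float:
    """Delta S_total = Delta S_system + Delta S_surroundings."""
    return ds_system + ds_surroundings


# Assumed example: 1 mol of ice melts reversibly at 273.15 K.
# The ice absorbs about 6010 J; the surroundings give up the same heat at the same T.
T_MELT = 273.15
Q_FUSION = 6010.0  # J, approximate textbook enthalpy of fusion of water

ds_sys = isothermal_entropy_change(Q_FUSION, T_MELT)     # about +22.0 J/K
ds_surr = isothermal_entropy_change(-Q_FUSION, T_MELT)   # about -22.0 J/K
ds_total = total_entropy_change(ds_sys, ds_surr)         # 0 for a reversible process
print(f"dS_sys={ds_sys:.2f} J/K, dS_surr={ds_surr:.2f} J/K, dS_total={ds_total:.2f} J/K")
```

Because the heat flows reversibly at a single temperature, the two contributions cancel and the total entropy change is zero, consistent with the statement that entropy does not change in a reversible process. An irreversible process, such as heat flowing from a hot body to a colder one, would give a positive total.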
